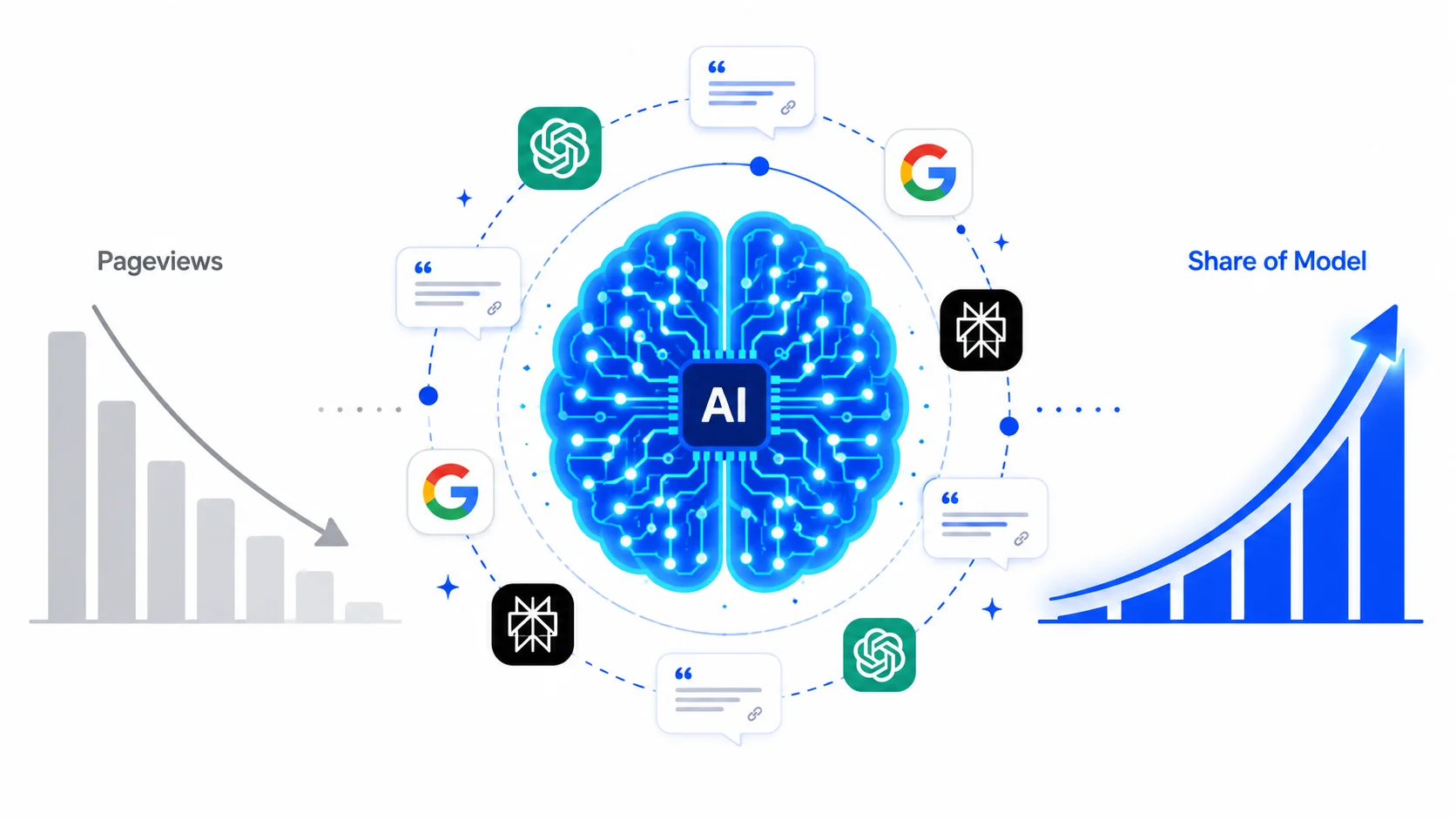

Share of Model: The New KPI Replacing Pageviews

For two decades, pageviews were the number that ended arguments. You published content, traffic went up, and the content program was working. The logic was tight. Search engines ranked your pages, readers clicked, and the click was the proof. The measurement model was clean because the user journey was linear: search, click, read, convert.

That journey no longer exists for the majority of your buyers. With the dominance of Google AI Overviews, Perplexity's answer engine, and the integration of SearchGPT, the user journey no longer guarantees a click. Over 60% of informational B2B queries now end without a click to a website. That is not a rounding error. That is the majority of your potential audience getting their answer from an AI summary and moving on.

When there is no click, there is no pageview. When there is no pageview, the metric that ran B2B content programs for two decades records nothing. The buyer engaged with your category, received an answer, formed an opinion about which vendors exist and which do not, and your analytics dashboard reported zero. The measurement model did not just undercount. It went blind.

Share of Model is the metric built for that reality. It is the percentage of AI-generated responses in which your brand appears when relevant prompts are tested across generative platforms, and it is read against how often competitors appear for the same prompts. If you test 100 relevant prompts and your brand appears in 37 responses, your Share of Model is 37%. That number tells you something pageviews never could: whether the systems that are now shaping buyer perception know your brand exists.
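The 37-out-of-100 calculation can be sketched in a few lines of Python. This is a minimal illustration, not a production tracker: the function name, the sample responses, and the brand names are all made up, and the plain substring match is an assumption that would miscount brands whose names are common words.

```python
def share_of_model(responses: list[str], brand: str) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    # Naive case-insensitive substring match (an assumption for this sketch).
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return 100.0 * hits / len(responses)


# Hypothetical sample: 3 of 4 responses mention the made-up brand "Acme".
sample = [
    "Top vendors include Acme and Globex.",
    "Consider Globex for mid-market teams.",
    "Acme is a common choice for enterprises.",
    "Many teams shortlist acme alongside Initech.",
]
print(share_of_model(sample, "Acme"))  # 75.0
```

With 100 responses of which 37 mention the brand, the same function returns 37.0.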

Why Pageviews Stopped Working Before Most Teams Noticed

The decline of pageviews as a meaningful B2B KPI did not happen overnight. It happened in stages, each one eroding a different part of the metric's reliability, until the number that marketing teams had optimized for years became a proxy for nothing in particular.

The first erosion was zero-click search. Zero-click searches, where users receive a direct AI-generated answer and never click on a website, already account for 59.7% of all Google queries in Europe. When Google AI Mode is active, this figure rises to 93%. A content team tracking pageviews through this period saw traffic numbers hold relatively stable because searchers were still arriving from queries that did not trigger AI summaries.

But the queries that mattered most, the high-intent, category-defining, vendor-evaluation queries, were precisely the ones most likely to receive an AI answer and no click. The traffic that remained was increasingly low-intent. The traffic that disappeared was the traffic that converted.

The second erosion was dark traffic. AI-driven visits rarely arrive with clean referral tags. Instead, they often appear as direct traffic in analytics tools. Buyers who encountered your brand in an AI-generated answer, remembered it, and typed your URL directly into their browser showed up in analytics as direct traffic.

The content that drove the AI citation (the blog post, the data report, the structured definition page) received no attribution credit. Pageviews did not record the interaction. The content team had no signal that the asset was working.
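For the visits that do carry a referrer, a small classifier can at least separate identifiable AI referrals from everything else. The sketch below is an assumption-heavy illustration: the hostname list is a hypothetical starting set that each team would need to maintain itself, and, as described above, sessions that arrive with no referrer at all stay in the direct bucket and cannot be recovered this way.

```python
from urllib.parse import urlparse

# Hypothetical starting list of AI assistant hostnames; verify and extend it
# against your own analytics data before relying on it.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def classify_referrer(referrer: str) -> str:
    """Label a session as 'direct', 'ai_referral', or 'other'."""
    if not referrer:
        return "direct"  # includes typed-in URLs prompted by an AI answer
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return "ai_referral" if host in AI_REFERRER_HOSTS else "other"


print(classify_referrer("https://www.perplexity.ai/search?q=crm+vendors"))
```

Tracking the size of the direct bucket next to the ai_referral bucket over time gives a rough, indirect signal of how much dark traffic may be AI-influenced.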

The third erosion was the winner-takes-most dynamic inside AI responses. In an AI Overview, if you are not one of the top two or three cited sources or the primary entity mentioned in the synthesis, you effectively do not exist. Share of Model is the only accurate way to gauge brand health in a zero-click ecosystem.

A brand ranking in position four on a traditional SERP still received 5 to 8% of clicks. A brand absent from an AI-generated response received nothing, and its pageview count reflected that absence as a flat line rather than a loss.

By the time most B2B marketing teams acknowledged the problem, they had spent 12 to 18 months optimizing for a metric that was measuring the wrong surface.

What Share of Model Actually Measures

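The formula for Share of Model is straightforward. In its competitive form, it compares the mentions your brand receives with the total brand mentions in your category when relevant questions are asked across AI platforms like ChatGPT, Gemini, and Perplexity. Expressed as: your brand mentions divided by total category mentions, multiplied by 100. This is a share of mentions, so it is not the same number as the simple inclusion rate (responses that mention your brand divided by responses tested) used in the 37-out-of-100 example. State which of the two a reported score uses.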
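Written out, the two calculations the article describes look like this. The symbols are notation introduced here for illustration: R is the number of responses tested, R_b the number of those that mention brand b, M_c the number of mentions of brand c, and C the set of brands in the category (including b).

```latex
\[
\text{SoM}_{\text{inclusion}}(b) = \frac{R_b}{R} \times 100,
\qquad
\text{SoM}_{\text{mentions}}(b) = \frac{M_b}{\sum_{c \in C} M_c} \times 100
\]
```

With R = 100 and R_b = 37, the inclusion form gives 37%. The mention-share form depends on how often competitors are named in the same responses, so the two scores for one brand will generally differ.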

That formula produces a number between 0 and 100. But the number is only as meaningful as the prompt library used to generate it. A Share of Model score built on ten generic prompts tells you almost nothing.

A Share of Model score built on 200 prompts that map to your actual buyer's evaluation queries, covering problem-aware searches, category comparisons, vendor shortlists, and implementation questions, gives you a genuine read on your generative visibility across the full buyer journey.

A brand with 12% Share of Model in its category appears in roughly 1 in 8 relevant AI responses. Tracking Share of Model means running hundreds of category-level queries across ChatGPT (which holds roughly 64% of the conversational AI market), Gemini, and Perplexity, then calculating how often your brand surfaces.

Three dimensions of Share of Model matter beyond the raw percentage.

Mention frequency is the base rate: how often your brand appears in AI responses at all. This is the recall metric. A brand that appears in 30% of relevant responses is being considered by the AI systems your buyers are using. A brand that appears in 5% is functionally absent from the generative layer, regardless of its organic search rankings.

Positional authority measures where your brand appears in the response. An AI summary that lists six vendors and puts your brand fifth is not equivalent to a response where your brand is the primary recommendation. Share of Model tracks not just how frequently a brand appears, but how favorably: whether the mention reads as a recommendation or as a secondary reference.

Entity alignment measures whether the AI's description of your brand matches the positioning you want to own. If you are a premium enterprise tool but AI keeps framing you as a budget alternative, that is an entity drift problem. It needs fixing at the content and PR level, not just the technical level. Share of Model without entity alignment analysis tells you that the AI knows your brand. It does not tell you whether the AI is saying the right things about it.
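Of the three dimensions, positional authority is the easiest to reduce to a number. The sketch below assumes each AI response has already been parsed into an ordered list of vendor names. That parsing step, the function name, and the sample data are all hypothetical.

```python
from typing import Optional


def average_position(vendor_lists: list[list[str]], brand: str) -> Optional[float]:
    """Mean 1-based rank of the brand across the responses that list it."""
    ranks = [vendors.index(brand) + 1 for vendors in vendor_lists if brand in vendors]
    if not ranks:
        return None  # brand never appeared, so there is no position to average
    return sum(ranks) / len(ranks)


# Hypothetical parsed responses: ordered vendor lists from three AI answers.
parsed = [
    ["Globex", "Acme", "Initech"],
    ["Acme", "Globex"],
    ["Globex", "Initech"],
]
print(average_position(parsed, "Acme"))  # 1.5
```

The average only covers responses where the brand appears, so it should be read next to mention frequency rather than instead of it.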

| Metric | What It Measures | What It Misses |
|---|---|---|
| Pageviews | Clicks received | Zero-click impressions, AI citations, dark traffic |
| Share of Voice | Paid and organic reach vs. competitors | Generative visibility, LLM inclusion |
| Share of Search | Query volume for your brand vs. competitors | AI-generated answer inclusion |
| Share of Model | Brand inclusion rate across AI platforms | Click-through behavior, direct conversion |
| AI brand visibility (combined) | Citation frequency + sentiment + positional authority | None, if tracked holistically |

The Conversion Gap That Changes the Budget Argument

The reason Share of Model deserves immediate budget attention is not that pageviews are declining. It is that the traffic AI search sends converts at a rate that makes every other channel look inefficient.

AI referral traffic converts at 14.2%, compared to 2.8% for traditional Google searches. That is roughly five times higher. 58% of marketers say that while search traffic is down, AI referral traffic has much higher intent, so visitors who do arrive on your site are much further along in their buying journeys than they used to be.

The implication is significant. A content program that earns 10,000 pageviews from traditional organic search at a 2.8% conversion rate produces 280 leads. A content program that earns 2,000 visits from AI referral traffic at a 14.2% conversion rate produces 284 leads, from one-fifth of the traffic volume. The team tracking pageviews sees a traffic decline and reports a problem. The team tracking Share of Model and AI-driven conversion sees a channel shift and reports an opportunity.
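The arithmetic behind that comparison is easy to reproduce. The snippet below uses only the visit counts and conversion rates quoted above. The function name is illustrative, and the break-even figure is a derived value, not a number from any cited source.

```python
import math


def expected_conversions(visits: int, conversion_rate: float) -> float:
    """Expected conversions for a channel: visits times conversion rate."""
    return visits * conversion_rate


organic = expected_conversions(10_000, 0.028)    # traditional organic search
ai_referral = expected_conversions(2_000, 0.142)  # AI referral traffic
print(round(organic), round(ai_referral))  # 280 284

# AI referral visits needed to match the organic channel's conversions.
break_even_visits = math.ceil(organic / 0.142)
print(break_even_visits)  # 1972
```

Swapping in your own channel volumes and measured conversion rates turns this into a quick check of whether a traffic decline is also a pipeline decline.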

AI referral traffic is growing by 700% annually. That rate will fall as the base grows and the channel matures, but the directional signal is unambiguous: the surface that was not tracked on last year's marketing dashboard is the surface driving the highest-intent buyer behavior in 2026.

B2B companies that track content-attributed pipeline, not just traffic, report 2.3x higher executive confidence in content budgets. The statistic that saves your budget is not pageviews. It is the dollar figure attached to content-sourced deals. Share of Model is the upstream metric that predicts the dollar figure in a zero-click environment.

Generative Engine Optimization: How You Improve Your Share of Model Score

Share of Model is a measurement. Generative engine optimization is the practice of improving it. Generative engine optimization is the systematic process of producing, structuring, and distributing content so that AI systems cite, recommend, and reference your brand consistently across relevant query categories.

The distinction between generative engine optimization and traditional SEO is not tactical. It is architectural. SEO is the practice of optimizing content to rank a URL in a list of search results, with primary metrics of rankings, organic traffic, and click-through rate. Generative engine optimization focuses on Share of Model, semantic authority, and citation frequency rather than simple rank position.

Four factors drive Share of Model improvement directly.

Citation velocity from third-party sources. Third-party mentions are now roughly 3 times more correlated with AI visibility than traditional backlinks. An article on your own blog, however well-structured, carries less generative weight than a mention in an industry publication, an analyst report, or a journalist's piece. The generative engine optimization implication is that earned media is not a PR vanity exercise. It is the primary input to AI brand visibility.

Content freshness. AirOps research found that more than 70% of pages cited by AI were updated within the last 12 months. Regular refresh cycles give content a stronger chance to remain part of AI answers. Generative engine optimization requires an ongoing refresh calendar, not a one-time publication program. An asset that earns AI citations in January and is not updated for 14 months will lose generative visibility as newer, fresher content fills the same query space.

Structured content architecture. AI systems extract discrete, verifiable facts more reliably from pages with explicit definitions, comparison tables, and FAQ schema than from unstructured prose. A generative engine optimization content brief specifies the definition in the first 200 words, the comparison table in the second section, and the FAQ layer in the final section, regardless of what the piece is about. This is not a stylistic preference. It is a citation probability decision.

Prompt-specific topical authority. AI systems detect patterns of authority based on how frequently and consistently a brand is associated with specific topics. Scattered content across unrelated topics weakens semantic positioning. A brand that publishes 40 pieces on a single topic cluster builds a stronger entity association for that topic than a brand that publishes 200 pieces across 50 unrelated categories. Generative engine optimization concentrates content investment into the topic clusters where the Share of Model improvement will have the highest commercial impact.

Building the Share of Model Measurement Stack

The primary challenge with Share of Model is that no equivalent of Google Search Console exists for generative visibility. Brands instead rely on Share of Model tracking tools that use synthetic prompting: they fire thousands of variations of user questions into the model, then analyze the responses to determine how often the brand is mentioned, where it appears in the list, and the sentiment of the mention.

Several purpose-built tools have emerged for this.

Profound has received $155 million in funding and counts over 10% of Fortune 500 companies as customers. Otterly.ai provides multi-platform coverage, and Semrush has integrated AI visibility tracking into its existing dashboard for teams that want AI search metrics alongside traditional SEO data.

For teams not ready to invest in dedicated AI search metrics platforms, a structured manual process produces usable baseline data. Build a prompt library of 50 to 100 queries that map to your buyers' actual evaluation language. Cover four prompt categories: problem-aware queries, category comparison queries, vendor shortlist queries, and implementation questions.

Run each prompt across ChatGPT, Perplexity, and Google AI Overviews. Record whether your brand appears, where it appears, and how it is described. Do this monthly. The trend line across three months of manual prompting will reveal whether your generative engine optimization investment is moving the score.
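The manual process above amounts to keeping a structured log and summarizing it each month. The sketch below shows one possible shape for that log. The field names, the sample entries, and the brand flag values are hypothetical, and the responses themselves are assumed to have been collected and judged by hand.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Observation:
    month: str             # e.g. "2026-01"
    platform: str          # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    category: str          # one of the four prompt categories
    prompt: str
    brand_mentioned: bool  # recorded by whoever ran the prompt


def monthly_share(observations: list[Observation]) -> dict[str, float]:
    """Share of Model per month: % of logged responses that mention the brand."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for obs in observations:
        totals[obs.month] += 1
        hits[obs.month] += int(obs.brand_mentioned)
    return {month: 100.0 * hits[month] / totals[month] for month in sorted(totals)}


# Hypothetical log: the same four prompts run in two consecutive months.
log = [
    Observation("2026-01", "ChatGPT", "problem-aware", "how to reduce churn", False),
    Observation("2026-01", "Perplexity", "category comparison", "best churn tools", True),
    Observation("2026-01", "ChatGPT", "vendor shortlist", "top 5 churn vendors", False),
    Observation("2026-01", "Google AI Overviews", "implementation", "churn tool setup", False),
    Observation("2026-02", "ChatGPT", "problem-aware", "how to reduce churn", False),
    Observation("2026-02", "Perplexity", "category comparison", "best churn tools", True),
    Observation("2026-02", "ChatGPT", "vendor shortlist", "top 5 churn vendors", True),
    Observation("2026-02", "Google AI Overviews", "implementation", "churn tool setup", False),
]
print(monthly_share(log))  # {'2026-01': 25.0, '2026-02': 50.0}
```

Grouping by platform or category instead of month uses the same pattern and shows where in the buyer journey the brand is missing.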

Only 30% of brands stay visible from one AI answer to the next, and just 20% remain visible across five consecutive runs. That level of volatility makes one-off checks meaningless and continuous measurement essential.
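That volatility can be measured for your own brand by repeating the same prompt several times and summarizing the results. The sketch below is one way to do it. The function name, the metric names, and the sample run data are assumptions introduced for illustration.

```python
def run_stability(appearances: list[bool]) -> dict:
    """Summarize repeated runs of one prompt (True = brand appeared in that run)."""
    if not appearances:
        raise ValueError("need at least one run")
    # For each consecutive pair of runs where the brand was visible in the
    # first run, record whether it was still visible in the next one.
    followups = [nxt for cur, nxt in zip(appearances, appearances[1:]) if cur]
    carry_over = 100.0 * sum(followups) / len(followups) if followups else None
    return {
        "appearance_rate": 100.0 * sum(appearances) / len(appearances),
        "visible_in_all_runs": all(appearances),
        "carry_over_rate": carry_over,
    }


# Hypothetical result of running one prompt five times in a row.
runs = [True, True, False, True, True]
summary = run_stability(runs)
print(summary["appearance_rate"], summary["visible_in_all_runs"])  # 80.0 False
```

A high appearance rate with a low carry-over rate suggests the brand is present but unstable, which is a reason to average over several runs before reporting a score.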

| AI Search Metric | What to Track | Minimum Tracking Cadence |
|---|---|---|
| Share of Model | Brand mention rate across 100+ prompts | Monthly |
| Generative Inclusion Rate | % of industry prompts returning brand mention | Monthly |
| Positional authority | Average position in responses where the brand appears | Quarterly |
| Entity alignment | Accuracy of brand description in AI responses | Quarterly |
| AI-driven traffic conversion | Conversion rate of sessions from AI referral | Weekly |
| Citation velocity | New third-party mentions indexed by AI platforms | Monthly |

The Benchmarks That Tell You Where You Stand

Share of Model benchmarks are still early-stage, but practitioner data is producing a working framework. If you are a market leader in your category and you are appearing in fewer than 30% of relevant AI responses, that is a problem worth addressing. If you are a smaller brand and you are appearing in 20% of responses while the category leader appears in 50%, that gap is your roadmap.

Brand Share of Model varies significantly across AI platforms. Ariel commands nearly 24% of mentions on Meta's Llama but less than 1% on Google's Gemini. Chanteclair enjoys a 19% Share of Model on Perplexity but disappears completely from Llama. This platform variance is not an anomaly. It reflects different training data compositions, different recency weighting, and different citation architectures across models. It also means a single-platform Share of Model score is insufficient. Multi-platform tracking is the minimum viable measurement standard.

The competitive intelligence dimension of Share of Model is where the metric earns its place on the CMO dashboard. A brand appearing in 22% of relevant AI responses knows its score. A brand that also knows its primary competitor appears in 48% of the same responses knows its gap, its urgency, and its generative engine optimization priority. Knowing you appear in 30% of responses means little unless you know your main competitor appears in 60%. Always track competitors.
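The gap calculation is a simple subtraction, but keeping it explicit makes the competitor comparison a standing part of the report. The sketch below uses the 22% and 48% figures from the example above. The brand labels, the third score, and the function name are hypothetical.

```python
def competitive_gaps(scores: dict[str, float], brand: str) -> dict[str, float]:
    """Percentage-point gap between each competitor's score and the brand's own."""
    own = scores[brand]
    return {
        name: round(score - own, 1)
        for name, score in scores.items()
        if name != brand
    }


# Share of Model scores measured on the same prompt set (illustrative values).
scores = {"YourBrand": 22.0, "Competitor A": 48.0, "Competitor B": 15.0}
print(competitive_gaps(scores, "YourBrand"))
# {'Competitor A': 26.0, 'Competitor B': -7.0}
```

Positive values are competitors ahead of you and negative values are competitors behind. The comparison is only meaningful when every brand is scored on the same prompts and platforms.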

The Dashboard Rebuild: Adding Share of Model Without Removing What Works

Share of Model does not replace every existing metric. It replaces pageviews as the primary content visibility KPI and adds a measurement layer that the rest of the dashboard cannot cover.

The 2026 B2B marketing measurement stack runs four layers in parallel. Pageviews and organic traffic remain in the stack as channel health indicators, not primary success metrics. Pipeline-attributed content performance, which connects specific assets to closed revenue via CRM integration, remains the conversion anchor. Share of Voice across paid and earned media remains the competitive reach indicator.

Share of Model sits above all of them as the forward-looking visibility metric, predicting which brands will capture buyer consideration before buyers begin active comparison.

If AI systems consistently recommend your brand, you are positioned to capture future demand. That forward-looking quality is what distinguishes Share of Model from every metric it displaces. Pageviews told you what happened last month. Share of Model indicates what your pipeline is likely to look like in the next quarter, before the deals show up in your CRM.

The Baseline That Cannot Wait

Start by querying your brand name and your core service categories across ChatGPT, Perplexity, Google AI Mode, and Gemini. Document what comes back. Are you cited? Are you mentioned? Is the information accurate? Do this for your top three competitors, too. This manual audit takes half a day. It will reveal gaps you did not know existed and competitive positions you had no way of seeing inside your current analytics dashboard.

The brands that moved early on Share of Model tracking have a compounding advantage now: they have baseline data from 12 months ago, trend lines that show direction, and generative engine optimization programs already running. The brands starting today are not too late, but they are starting without a baseline, which means the first three months of measurement are overhead before improvement becomes visible.

Set your baseline this week. Run 50 prompts. Record the scores. The number you see is not a judgment on your content program. It is the starting point for the measurement model that will replace the one that stopped working when your buyers stopped clicking.
